Solo Builder

The real difficulty is always one step further than the one you see

I'm a PM, not a dev. I know my product domain. I just need a tech stack… That's what I thought.

Previously in the Lyra talks

What we covered

Claude Code

The tooling: 17 skills, 9 agents, a full workflow from plan to deploy.

Dev Process

Tiers, phases, human gates. How to structure work with AI agents.

The Lyra Story

How a side-project became a personal intelligence engine.

The Technical Build

545 commits in 24 days. Hub-and-spoke, asyncio, hexagonal, streaming.

Product Decisions

Pivots, kill darlings, iteration speed. 52 days from first line to first user.

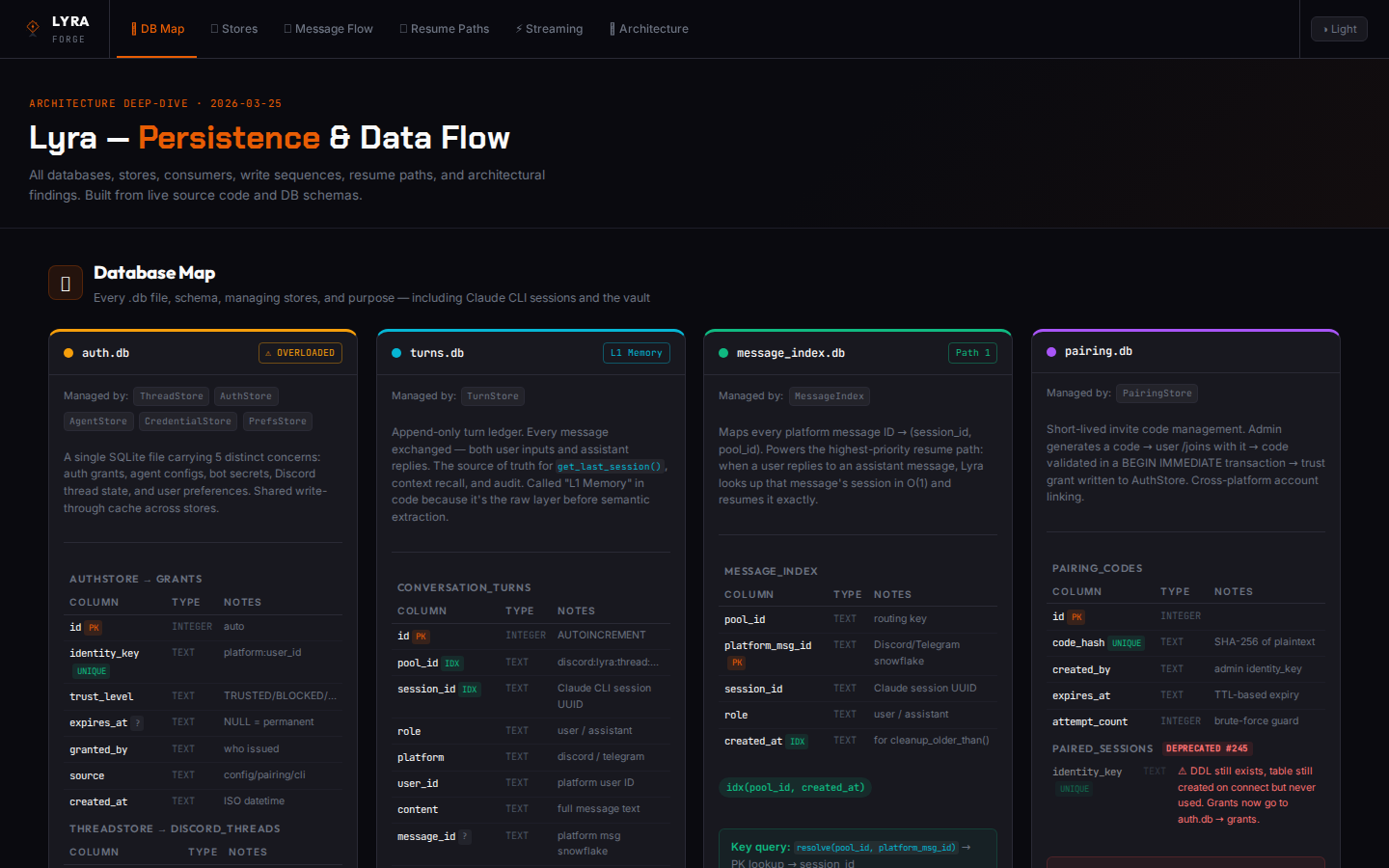

Lyra Architecture

Multi-agent, multi-channel, voice pipeline, memory. The platform under the hood.

Those talks told the what and the how. This one tells the journey — the real difficulties, the order they come in, and what we'd do differently.

"The hard part is the tech stack"

January. 1,200 commits on a boilerplate. You pick TanStack Start + NestJS + Drizzle + Better Auth. You think you've done the hard part. Then reality hits.

TanStack Start

React + Vinxi

NestJS

Fastify + Drizzle

Better Auth

RBAC + Multi-tenant

Paraglide

Compile-time i18n

shadcn/ui

Design system

GitHub Actions

CI/CD + Auto-merge

Vercel + Neon

Preview deploys

GDPR + Cookies

Consent management

Fumadocs

Documentation app

Playwright

E2E testing

Admin Panel

Users, orgs, audit

API Keys

Public API v1

Just the boilerplate. Not the product.

The tech stack is 20% choosing and 80% plumbing. But that's not where you'll struggle.

"The hard part is the tooling"

The tools you've used for years can't keep up. Linear, Jira, Confluence — incompatible with 50 commits per day. You migrate. Then you build.

Project bootstrap

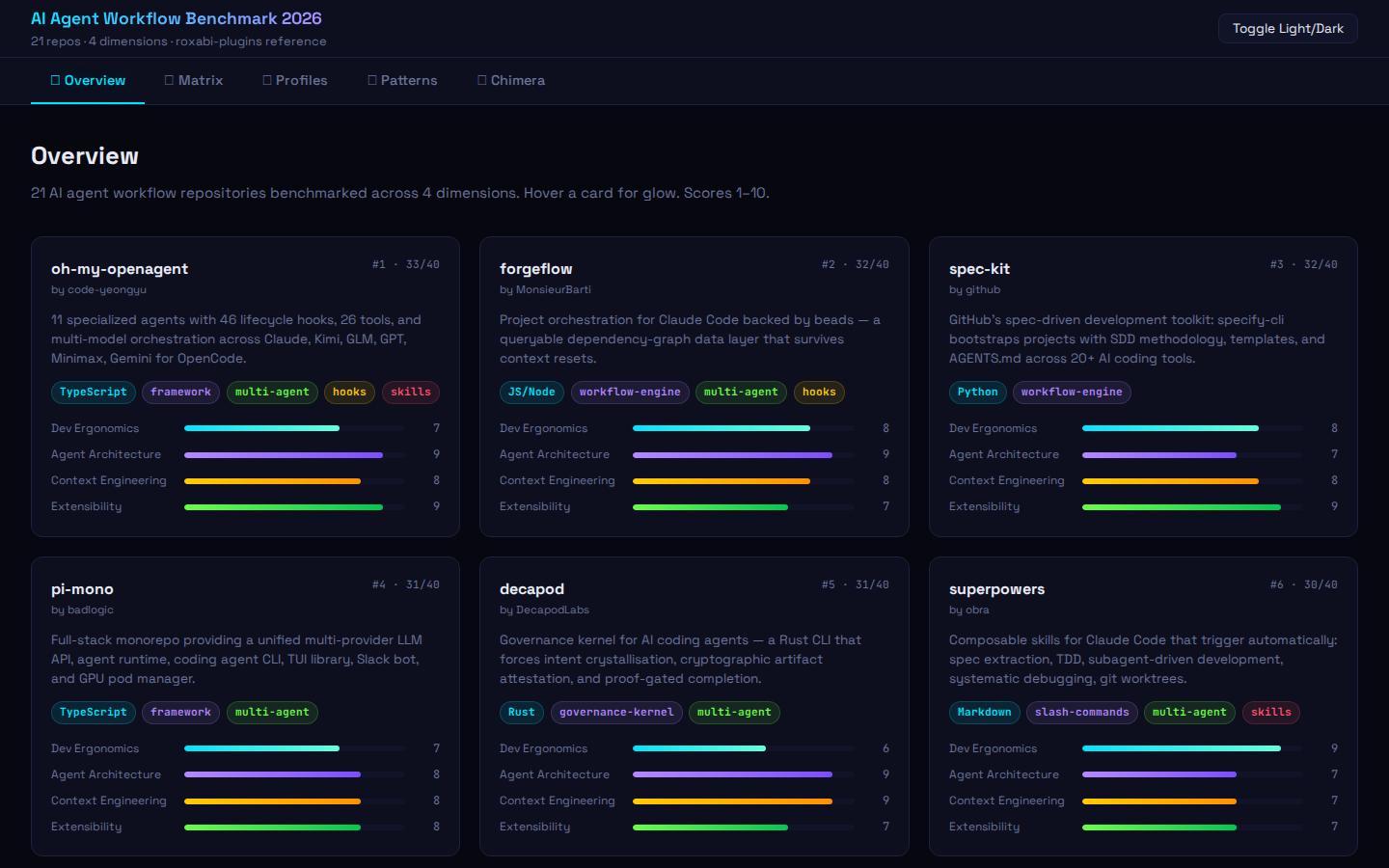

roxabi-plugins

Extensions for Lyra & Claude

Skills for Lyra & Claude

Content generation

You're no longer developing a product. You're maintaining an ecosystem. And the tooling has become a project of its own.

What did we actually build?

Two tools that changed the way every project starts and gets explained.

$ /init

Bootstrapping project…

✓ Project ready — 9 steps, zero manual config

Plugin sync

$ rsync plugins → ~/.claude/

Update & synchronize skills across all Claude caches

Service management

Coming soon?!

Vercel portless — deploy without leaving the terminal

You don't build tools because you want to. You build them because the alternative is doing the same thing by hand, every day, across 20 repos.

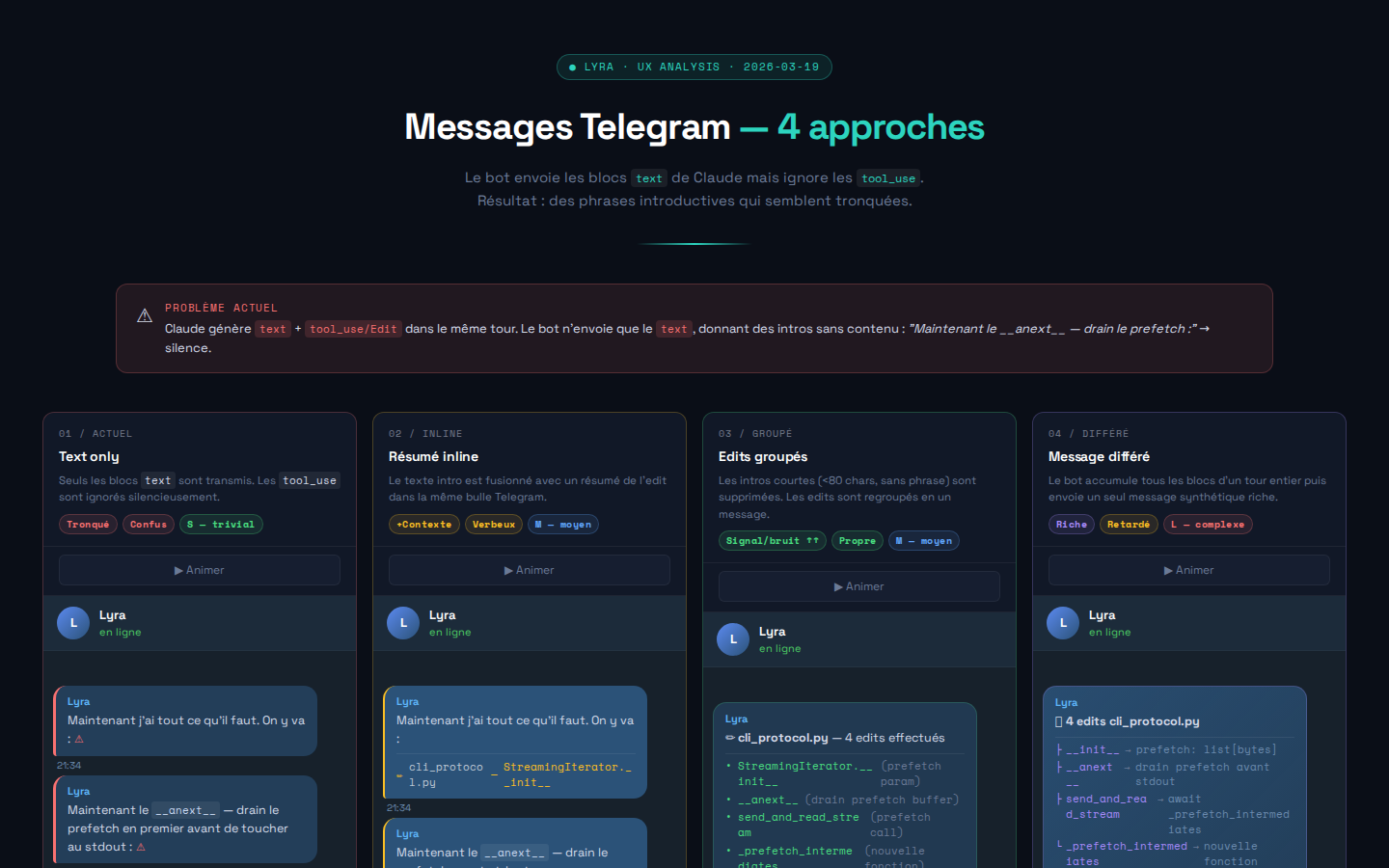

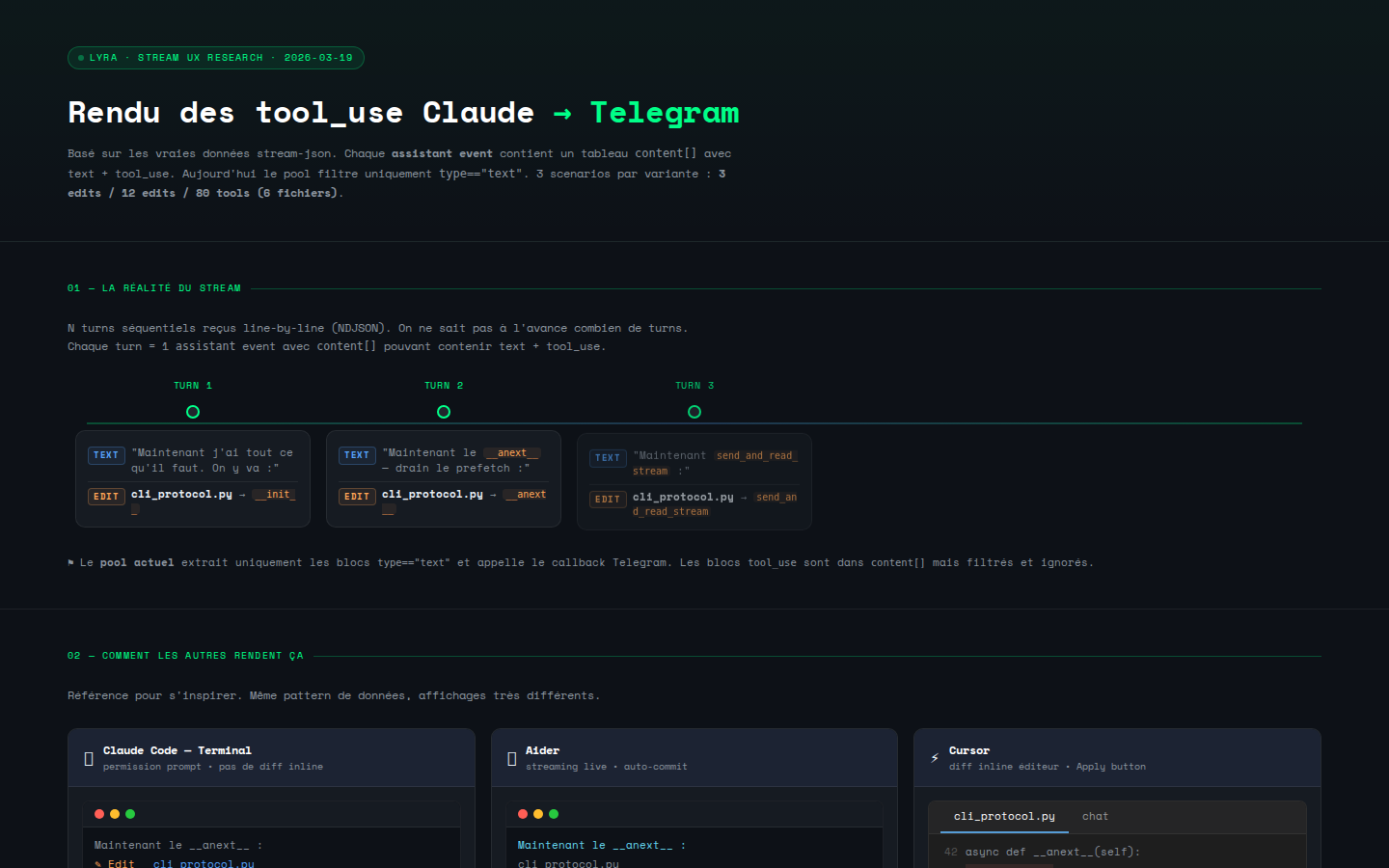

"The hard part is keeping up with the speed"

Lines changed per week

The two-track workflow

For the AI agent

analysis.md

spec.md

plan.md

For you, the decision-maker

Interactive HTML

Diagrams, dashboards, comparisons

Decision

GO / NO-GO

Rprod.

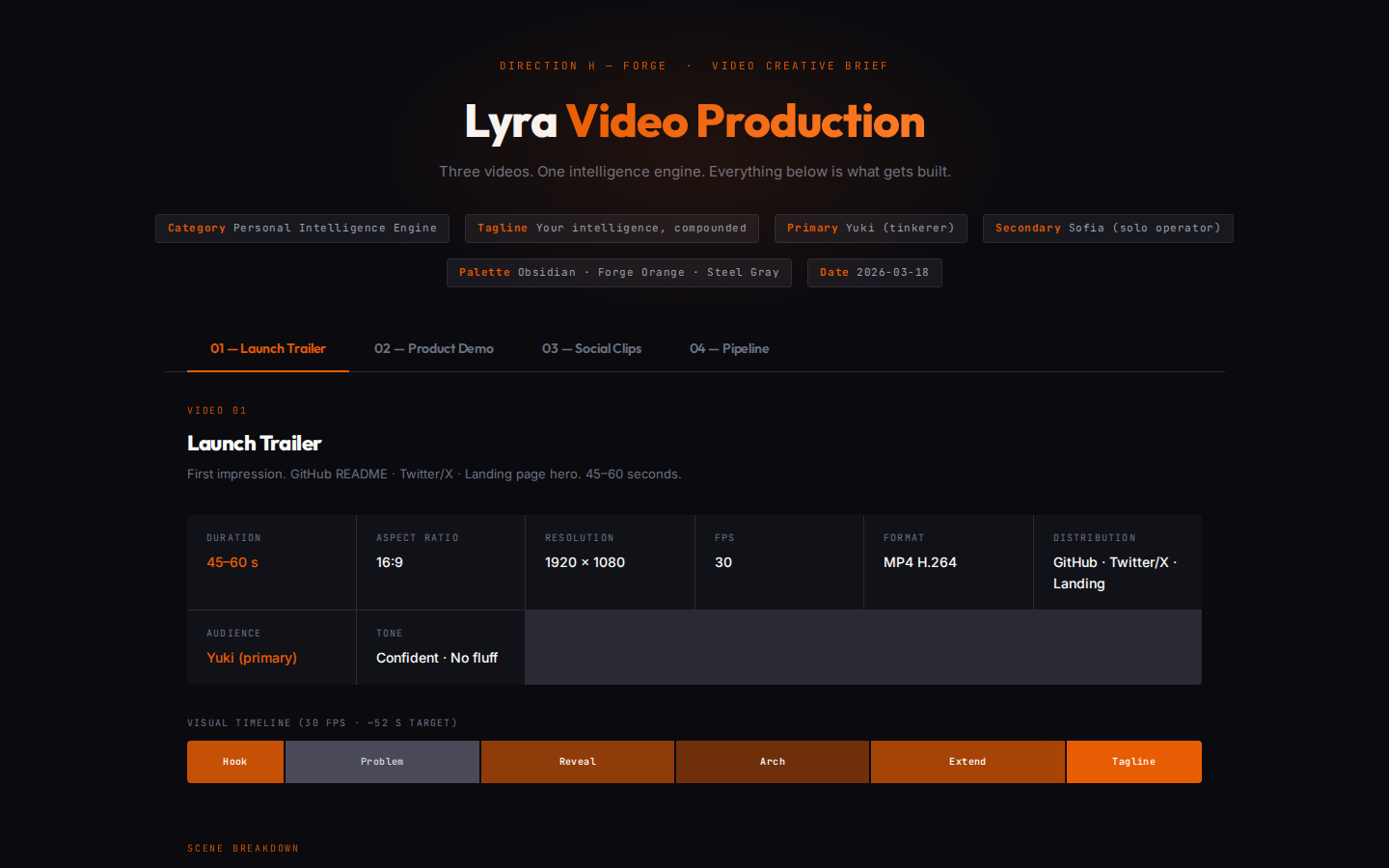

Remotion-based video production — talks, demos, animated explainers

Multiple seeds

Generate multiple examples with different seeds, pick the best

Speed is not an advantage if you lose visibility. And infra always ends up becoming a topic.

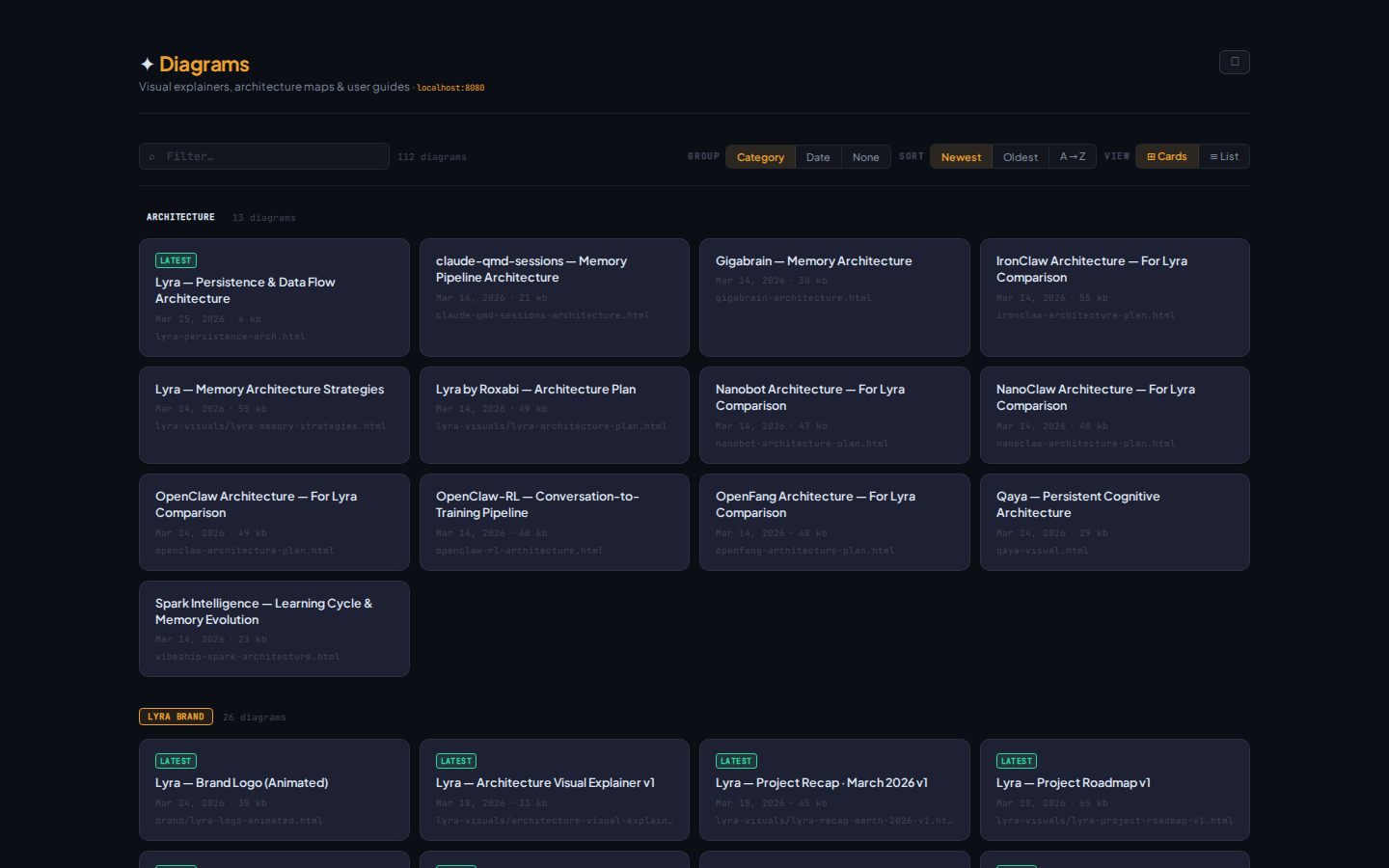

"make visuals" — your interactive library

$ make visuals

Scanning ~/.agent/diagrams/ …

hub-spoke.html: architecture

forgeflow.html: flow

mission-control.html: dashboard

benchmark-2026.html: analysis

symphony.html: architecture

deploy-pipeline.html: infra

One guide for every diagram — the agent reads it before generating. Consistent output, zero manual setup.
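As a rough illustration of what a command like "make visuals" could do behind the scenes, here is a minimal sketch that scans a folder of HTML diagrams and renders a link list. The function names `scan_diagrams` and `build_index` are hypothetical and the real command may work quite differently; only the idea of scanning a diagrams directory comes from the slide above.

```python
import html
from pathlib import Path


def scan_diagrams(root: Path) -> list[str]:
    """Return the sorted file names of all .html files directly under root."""
    return sorted(p.name for p in root.glob("*.html"))


def build_index(names: list[str]) -> str:
    """Render a minimal HTML list with one link per diagram file."""
    items = "\n".join(
        f'  <li><a href="{html.escape(n, quote=True)}">{html.escape(n)}</a></li>'
        for n in names
    )
    return f"<ul>\n{items}\n</ul>"
```

Calling `build_index(scan_diagrams(Path.home() / ".agent" / "diagrams"))` would produce the body of a browsable library page, assuming that directory exists on your machine.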

Generate → compare → pick the best

#42: clean lines

#137: bold contrast

#256: warm tones

#512: selected ✓

#789: high detail

Same prompt, multiple seeds — variations let you select the best result without re-prompting.
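The multi-seed idea can be sketched in a few lines. This is an illustration under assumptions, not the actual pipeline: `generate` is a hypothetical stand-in for a real image or video generator, and the numeric `score` stands in for the human judgment that picks the winner on the slide.

```python
import random


def generate(prompt: str, seed: int) -> dict:
    """Stand-in generator: a seeded RNG makes each variant reproducible."""
    rng = random.Random(seed)
    # Placeholder quality score; in practice a person compares the outputs.
    return {"seed": seed, "prompt": prompt, "score": rng.random()}


def best_of(prompt: str, seeds: list[int]) -> dict:
    """Generate one variant per seed from the same prompt and keep the best."""
    variants = [generate(prompt, seed) for seed in seeds]
    return max(variants, key=lambda v: v["score"])
```

Because each variant is tied to its seed, the selected one can be regenerated later without re-prompting, for example `best_of("talk cover", [42, 137, 256, 512, 789])`.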

Visibility scales when you standardize the output. A guide + a command = a visual factory.

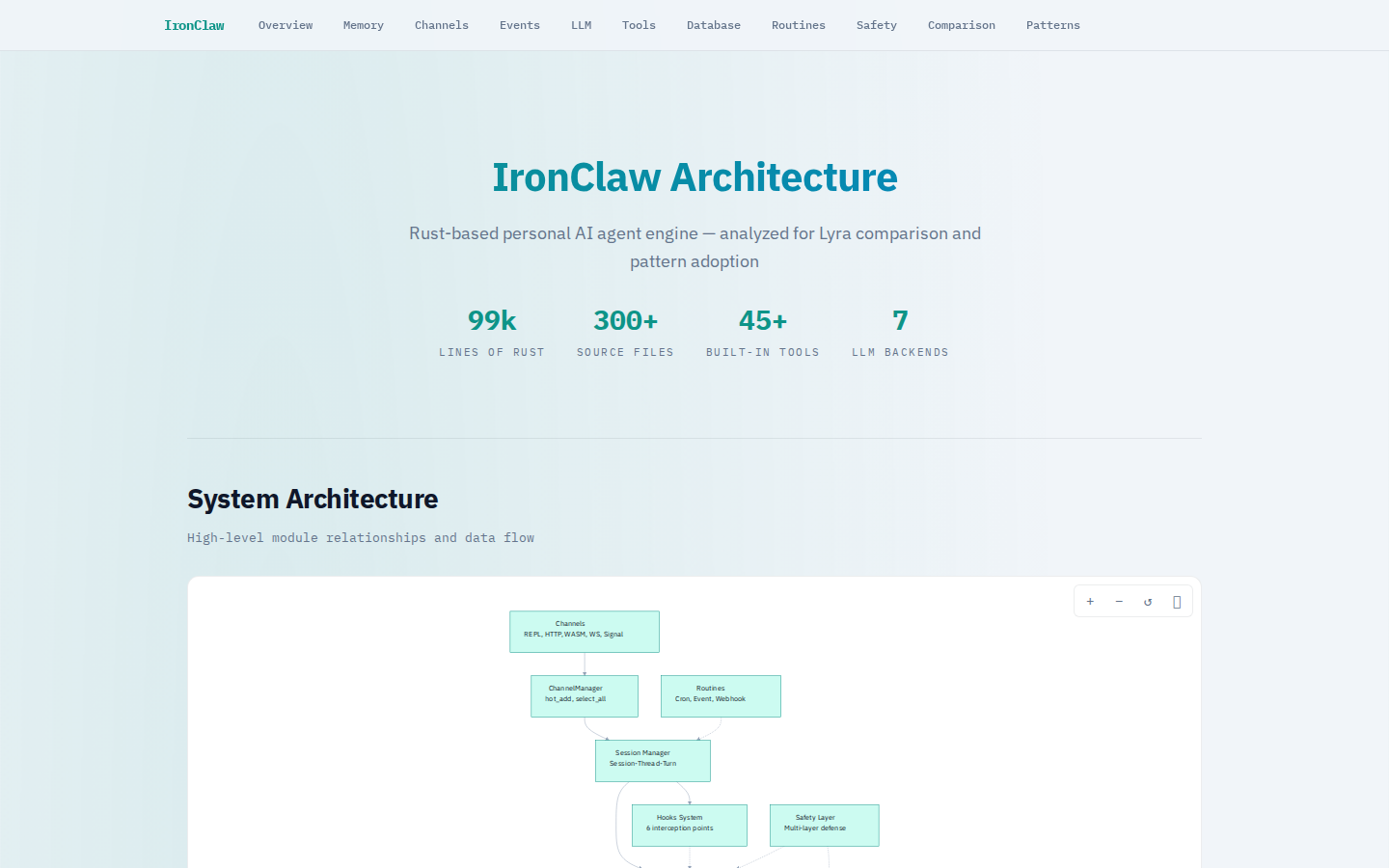

Reverse engineer everything

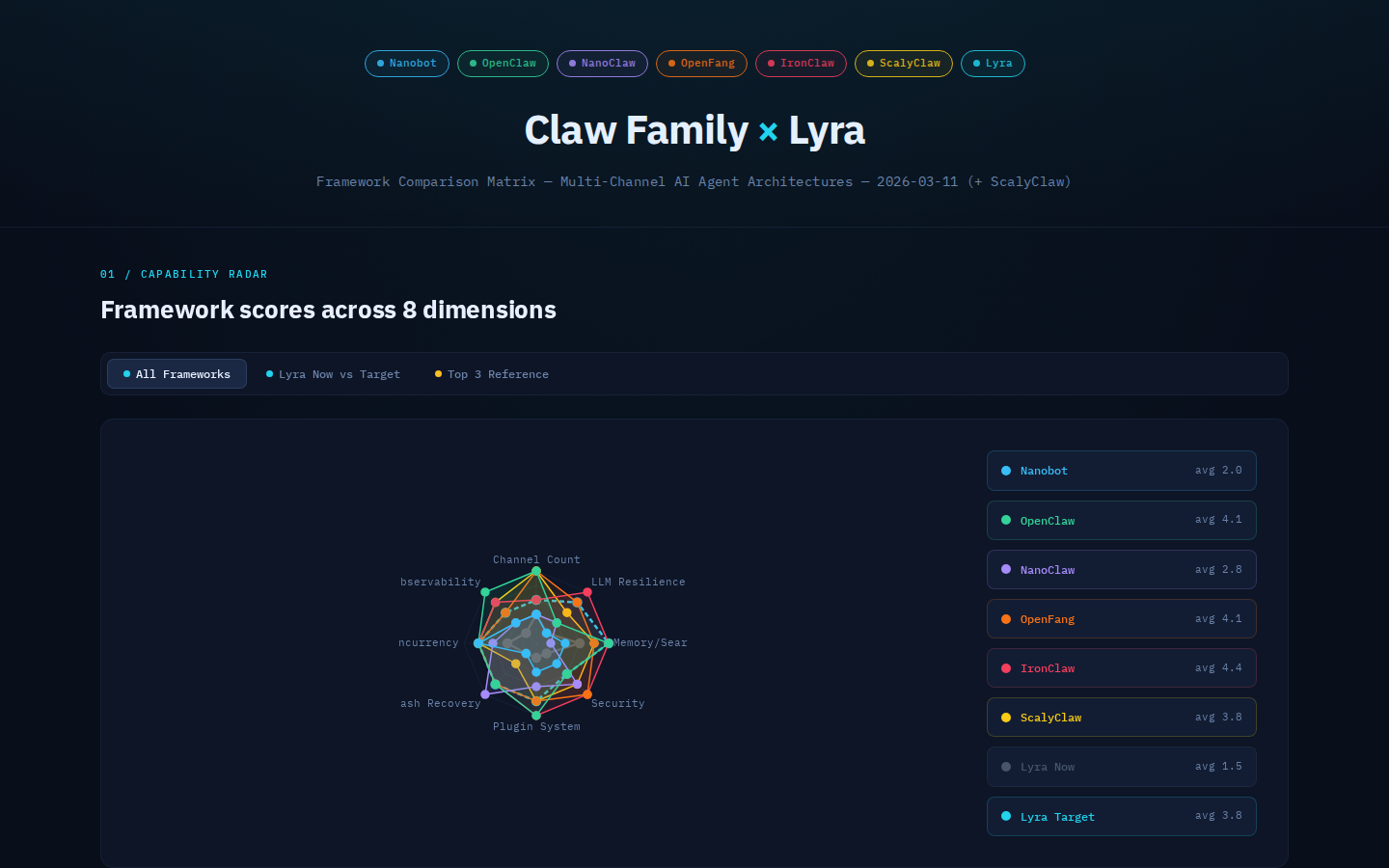

You don't build in a vacuum. You scrape, benchmark, compare, steal patterns. The best ideas come from deconstructing what already works.

The first Lyra presentation video was a 4/10 on production quality. Solution: scrape 10 top YouTube tech presentations, extract the patterns that work — pacing, cuts, typography, hooks. Apply. Iterate.

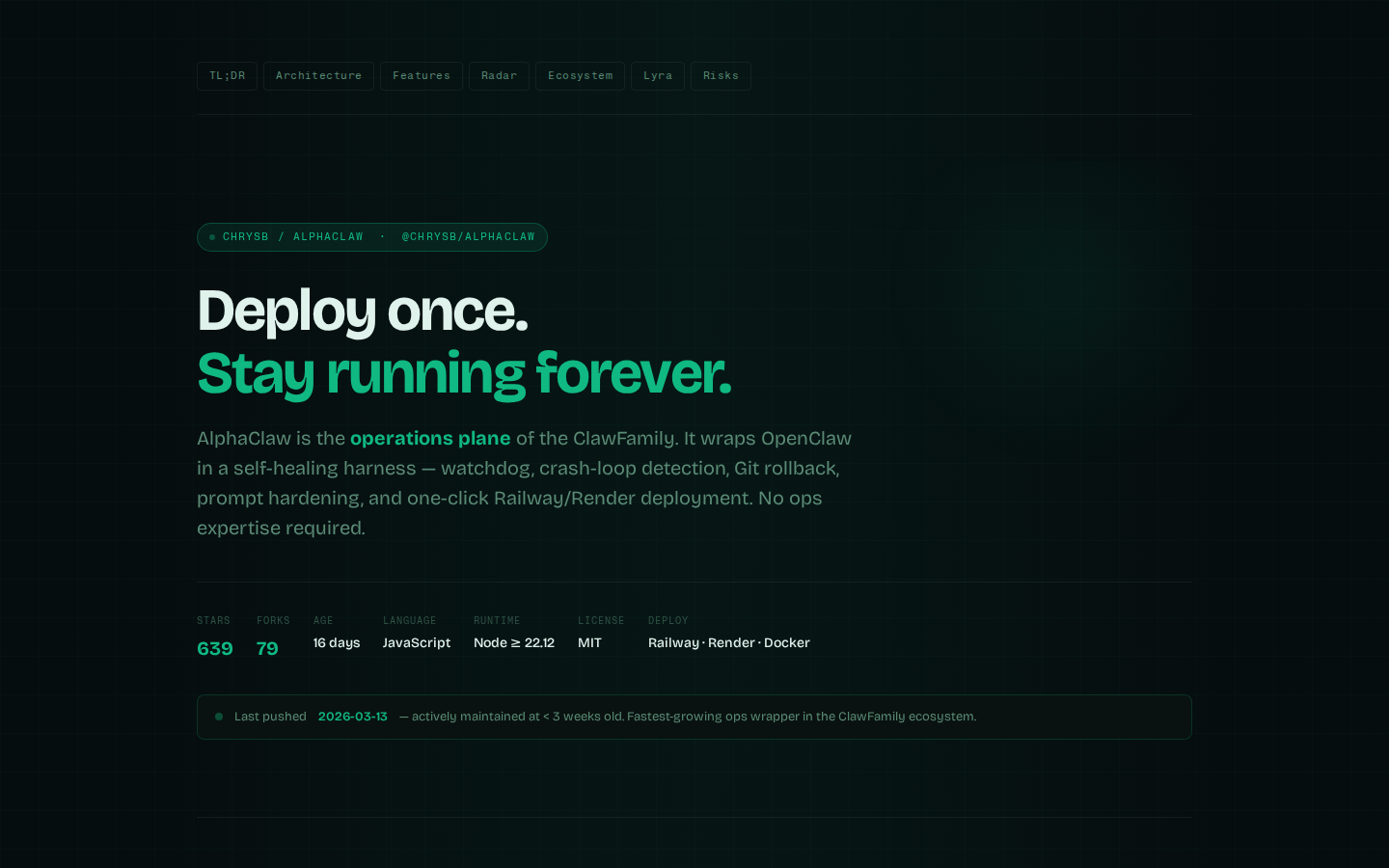

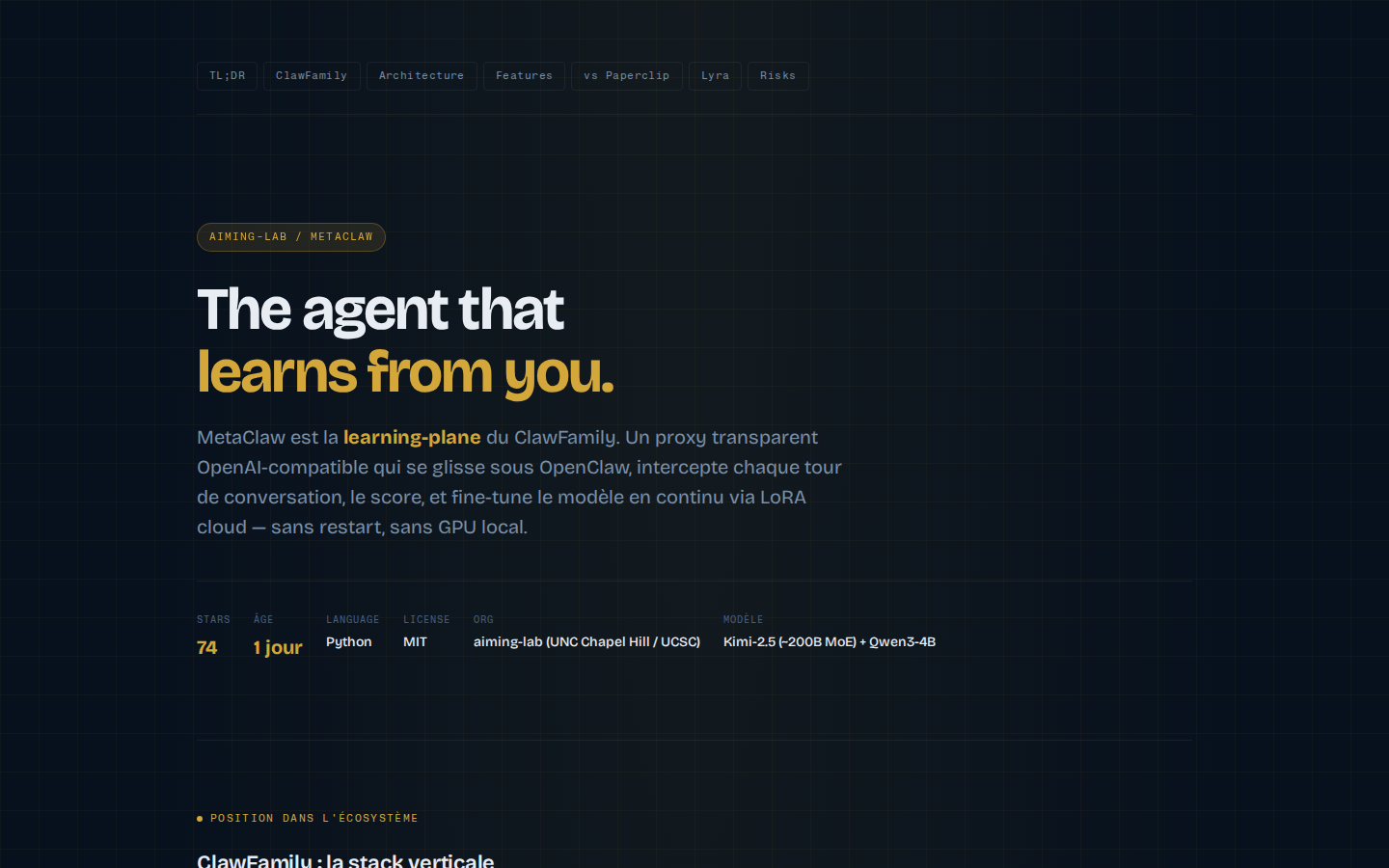

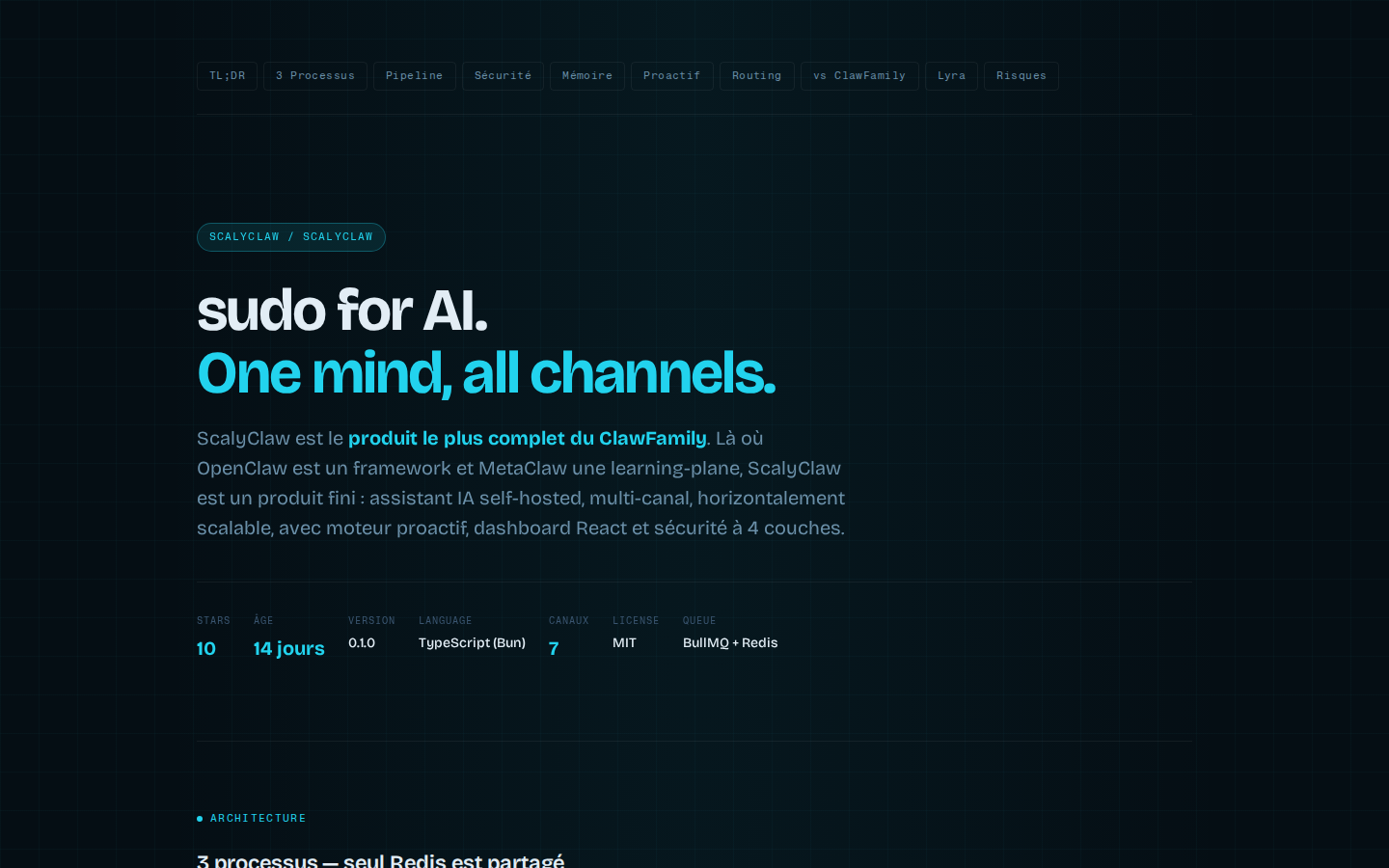

Lyra doesn't copy one agent framework — it borrows the best ideas from many. Each "claw" project is reverse-engineered to extract architectural patterns worth stealing.

The recon loop

01 Find 5–10 references in the wild

↓

02 Scrape / analyze / deconstruct

↓

03 Extract the patterns that work

↓

04 Apply to your own context

The fastest way to get good isn't to invent — it's to study what already works, then adapt it.

breathe

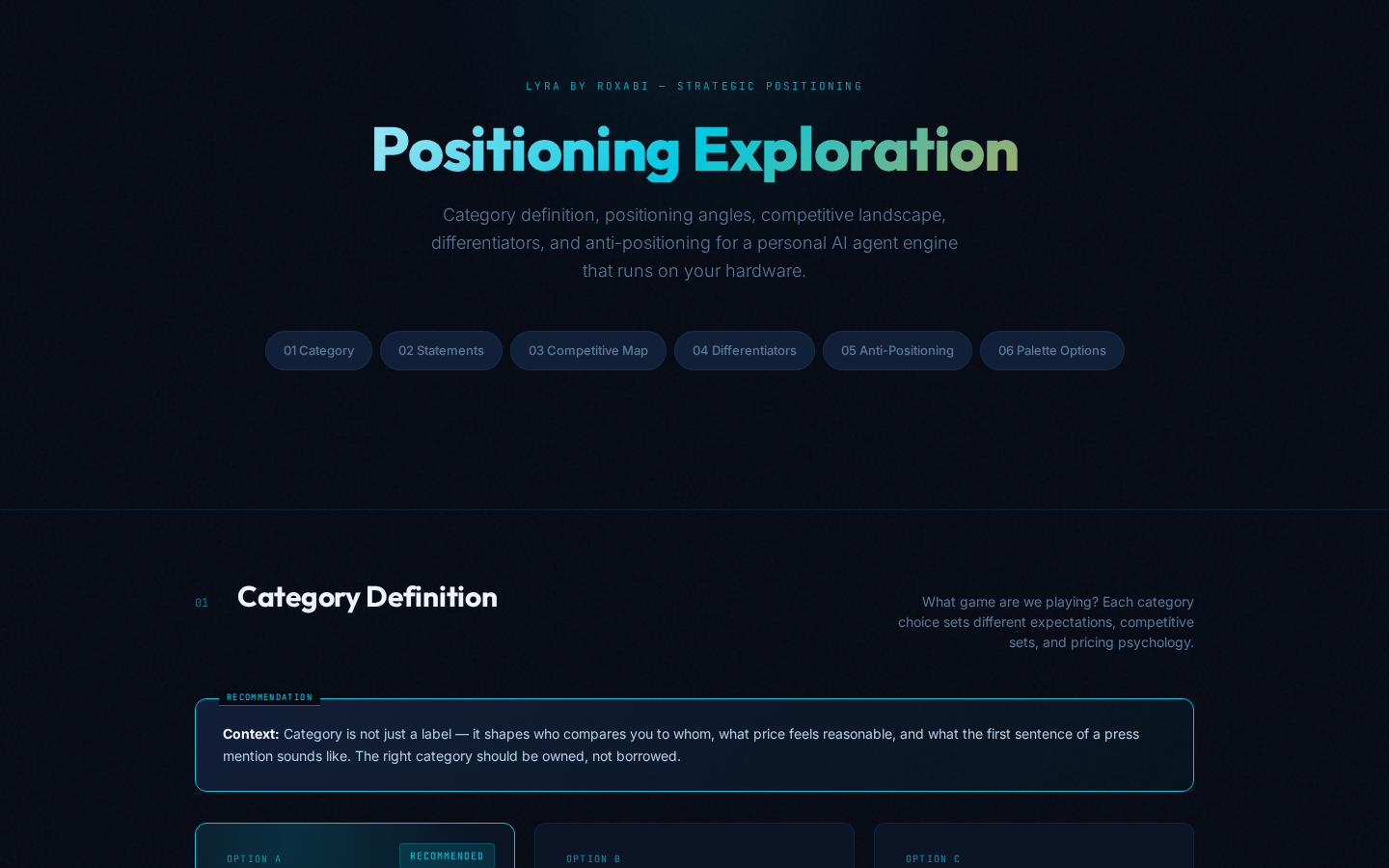

"The hard part is the product"

Tech pulls you in. You're in build mode, solving concrete problems, seeing progress. Then you realize you have skipped the one thing that is supposed to be your area of expertise.

Not done

Actual order

1. Tech stack

2. Tooling

3. Speed

4. Product ← ?!

Ideal order

1. Product

2. Tech stack

3. Tooling

4. Speed

The step you should have done first is the one you do last. The PM who didn't do their PM work.

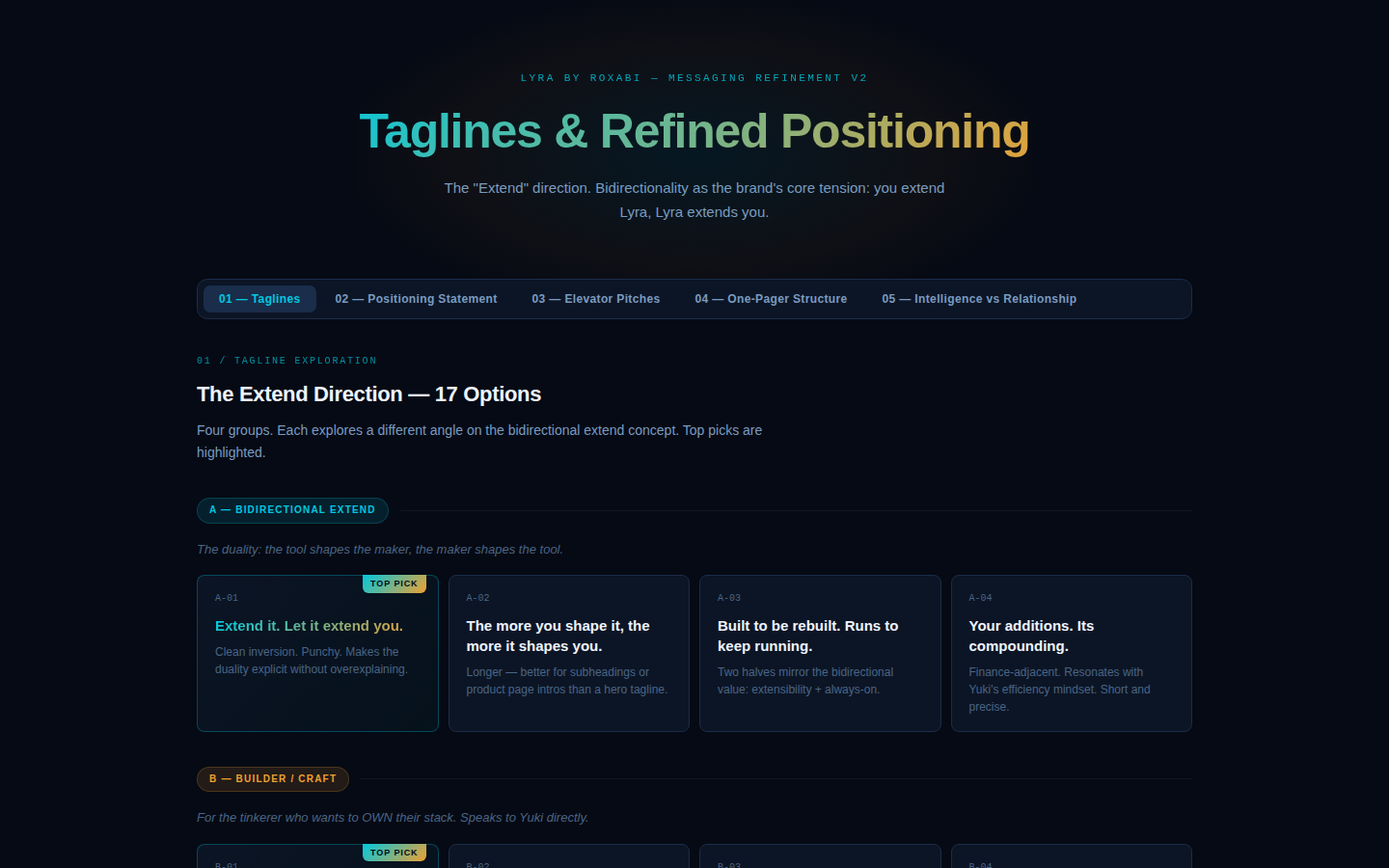

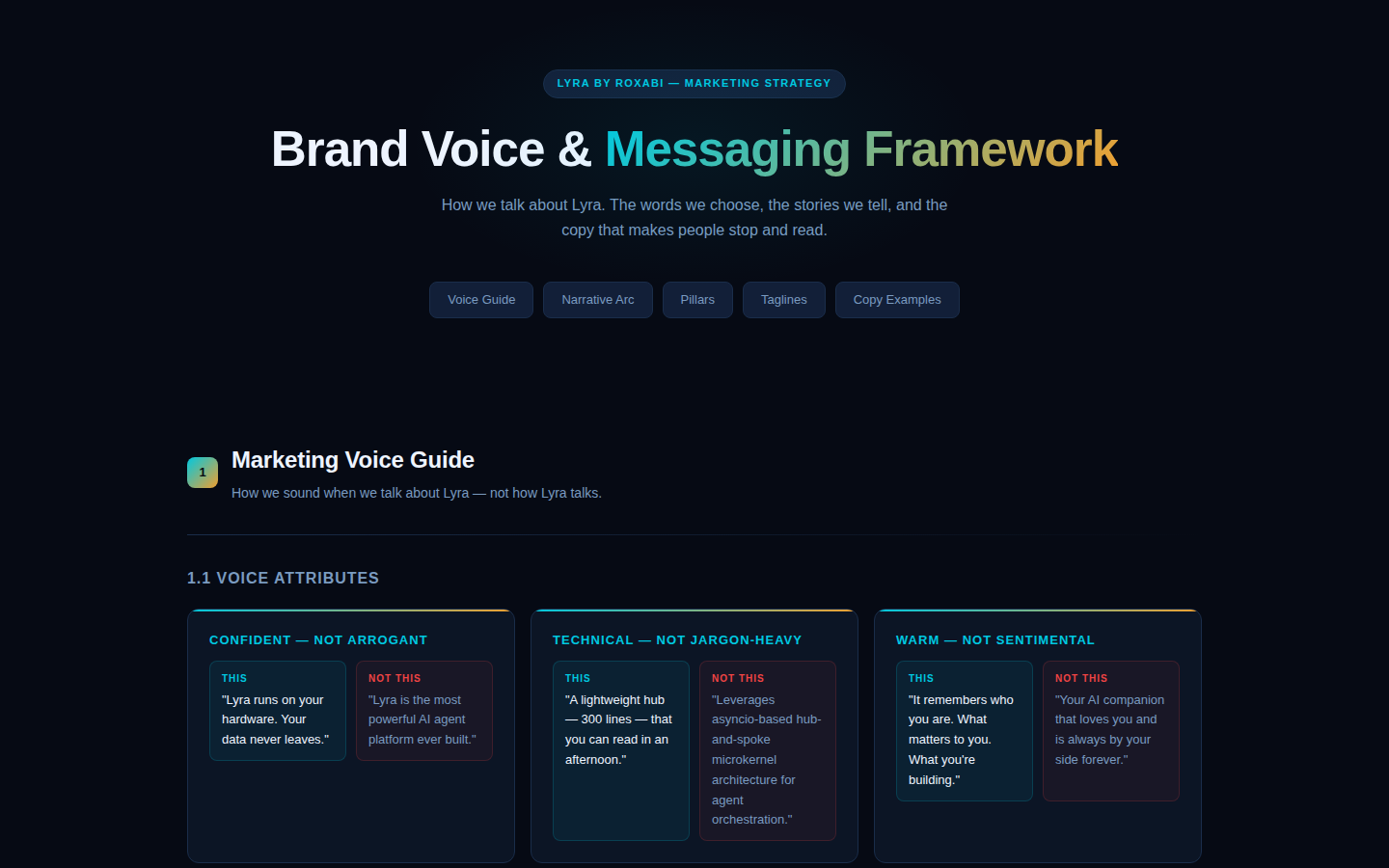

Don't lose the why

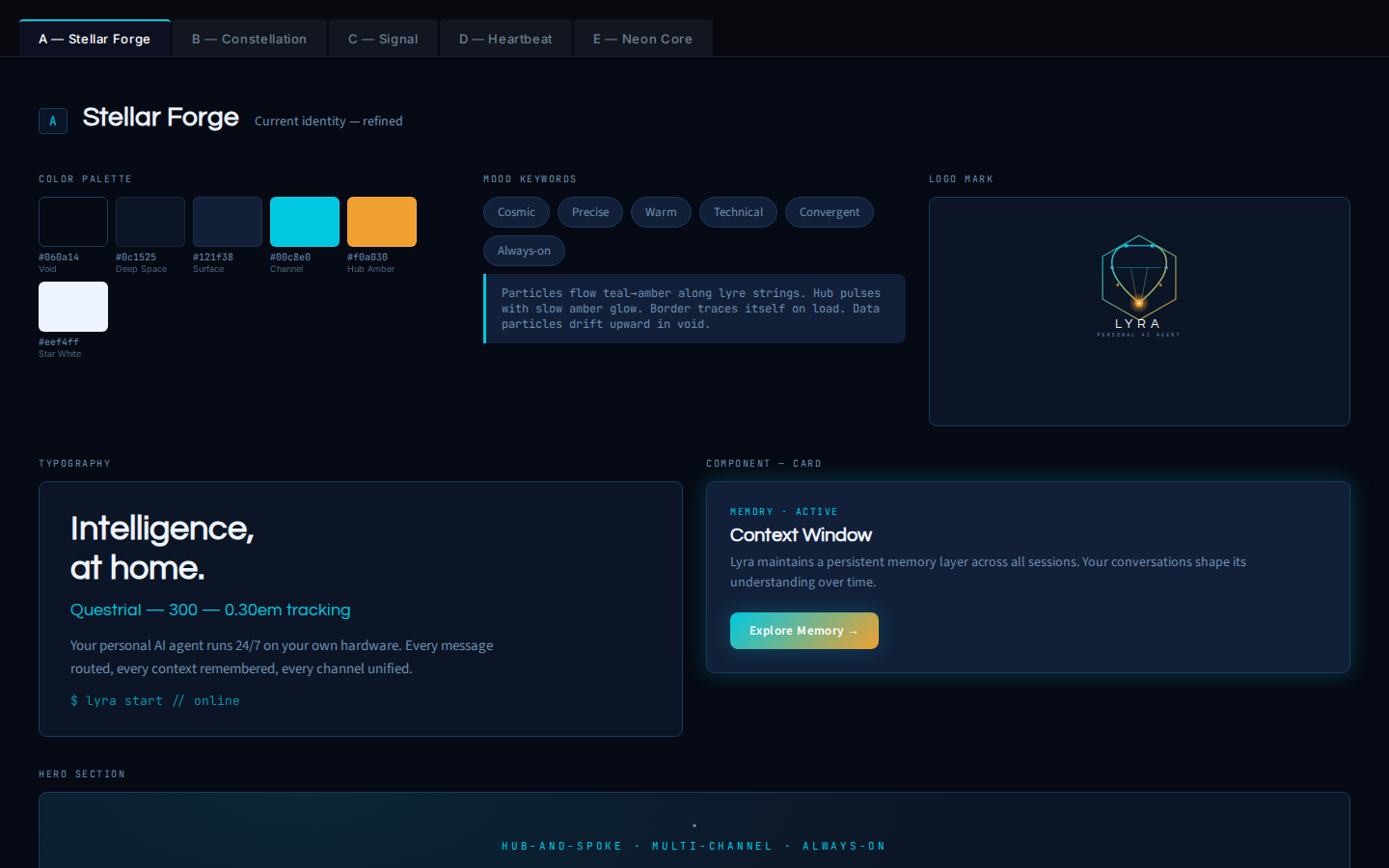

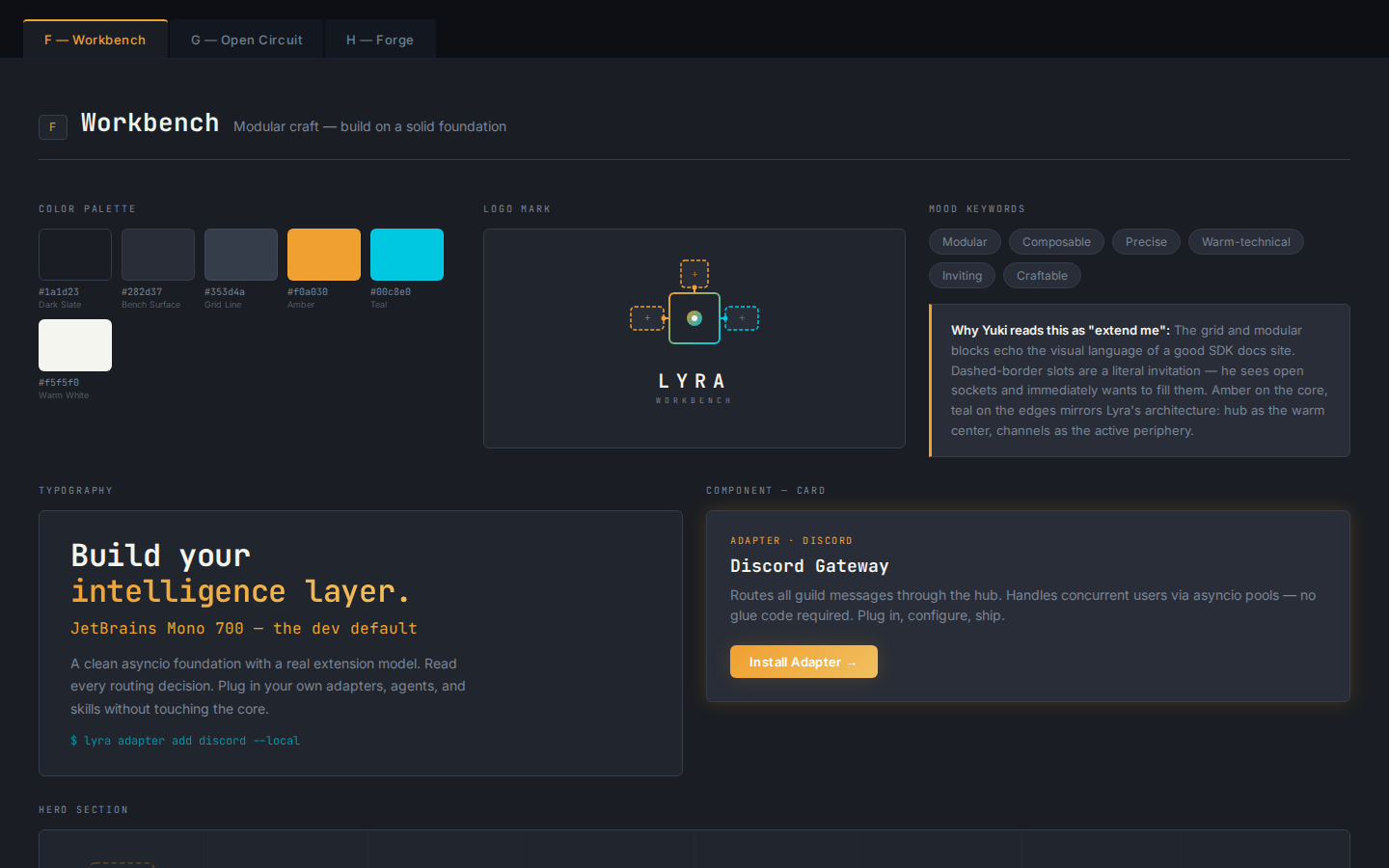

You need a playbook for brand, and methods for product. The question is when to use them — not whether.

Inventory everything before creating

Diverge — positioning, personas, visuals

Founder review, lock strategic pillars

Converge — voice, typography, messaging

Lock brand book, sign off

Logo, animation, handoff specs

Sub-variant funnel, kill list, polish

Brand video brief, Remotion pipeline

Agent-orchestrated, 4–8h of founder attention. Breadth first, then converge at decision gates.

Root-cause the real need

What job does the user hire this for?

Map the user journey before slicing

Why → Who → How → What

Must / Should / Could / Won't

Imagine it failed — why?

When to apply?

Frame → Before writing anything. Why are we doing this? For whom?

Spec → When slicing the solution. What's in, what's out?

Methods are not overhead. They're the cheapest insurance against building the wrong thing. The 5 Whys take 10 minutes — undoing a wrong feature takes weeks.

"The real hard part: steering without drifting"

The problem isn't building. It's keeping course. You spend long periods in tunnel mode. You don't step back. And you drift alongside the agent.

Altitude

Architecture, roadmap, prioritization

Detail

API, message format, edge case

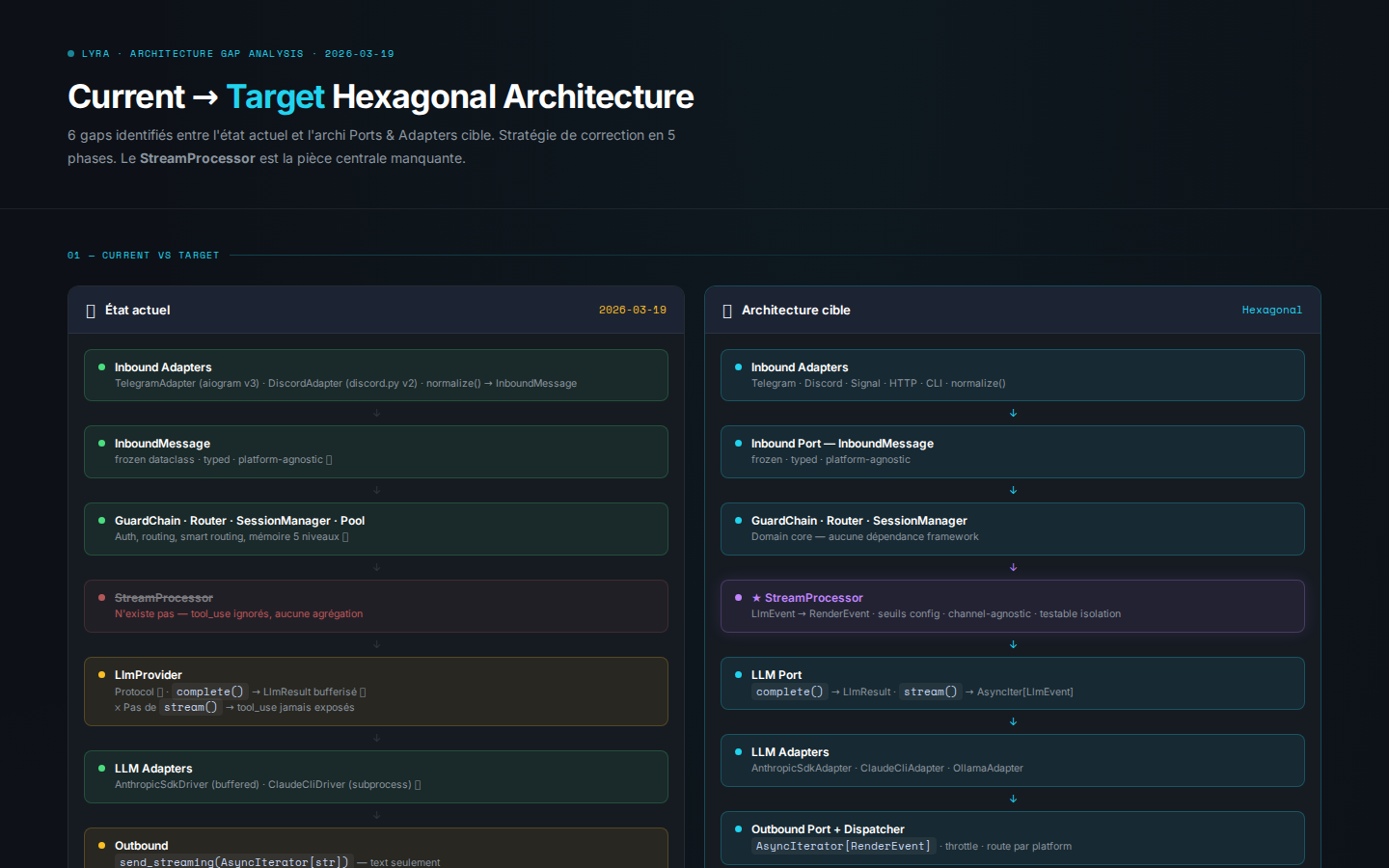

Lyra's hexagonal drift

W1–W3: You set up hexagonal architecture through your patterns.

W4–W6: The agent introduces 'exceptions'. You don't see it.

W7–W9: Bugs pile up. Root cause: the architecture is no longer hexagonal.

You drift at the same time as the agent. That's the most dangerous part: neither of you sees it.
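One way to make this kind of drift visible is to check import boundaries mechanically. The sketch below assumes a layout where inner (core) modules must never import from outer-layer packages. The package names `adapters` and `infrastructure` are hypothetical, not Lyra's real layout.

```python
import ast

# Hypothetical outer-layer packages that core code must not depend on.
FORBIDDEN_PACKAGES = ("adapters", "infrastructure")


def forbidden_imports(source: str, forbidden=FORBIDDEN_PACKAGES) -> list[str]:
    """Return imported module names whose top-level package is forbidden."""
    found = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module:
            names = [node.module]
        else:
            continue
        # Compare only the first dotted component, e.g. "adapters" in "adapters.x".
        found.extend(n for n in names if n.split(".")[0] in forbidden)
    return found
```

Run over every file in the core package, a non-empty result is exactly the kind of "exception" that otherwise goes unnoticed for weeks.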

Recalibrate

Lyra refactoring phases: real commits

core/ split into strict sub-directories

300-line cap enforced per file

LlmEvent / RenderEvent / StreamProcessor rewrite

545 commits on Lyra in 24 days, nearly half of them refactoring

50%

Features

50%

Refactoring

Automated guardrails

Agents don't drift if the linter catches them first. Strict rules are your cheapest steering tool.
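A guardrail such as the 300-line cap is cheap to automate. Here is a minimal sketch of such a check, assuming Python sources and a script wired into CI or a pre-commit hook. The function name `files_over_cap` is hypothetical, and an existing linter rule could serve the same purpose.

```python
from pathlib import Path

MAX_LINES = 300  # the per-file cap mentioned above


def files_over_cap(
    root: Path, max_lines: int = MAX_LINES, pattern: str = "*.py"
) -> list[tuple[str, int]]:
    """Return (relative path, line count) for every file exceeding the cap."""
    offenders = []
    for path in sorted(root.rglob(pattern)):
        count = len(path.read_text(encoding="utf-8").splitlines())
        if count > max_lines:
            offenders.append((str(path.relative_to(root)), count))
    return offenders
```

Failing the build whenever the returned list is non-empty means the agent hits the rule immediately, instead of you discovering a 900-line file three weeks later.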

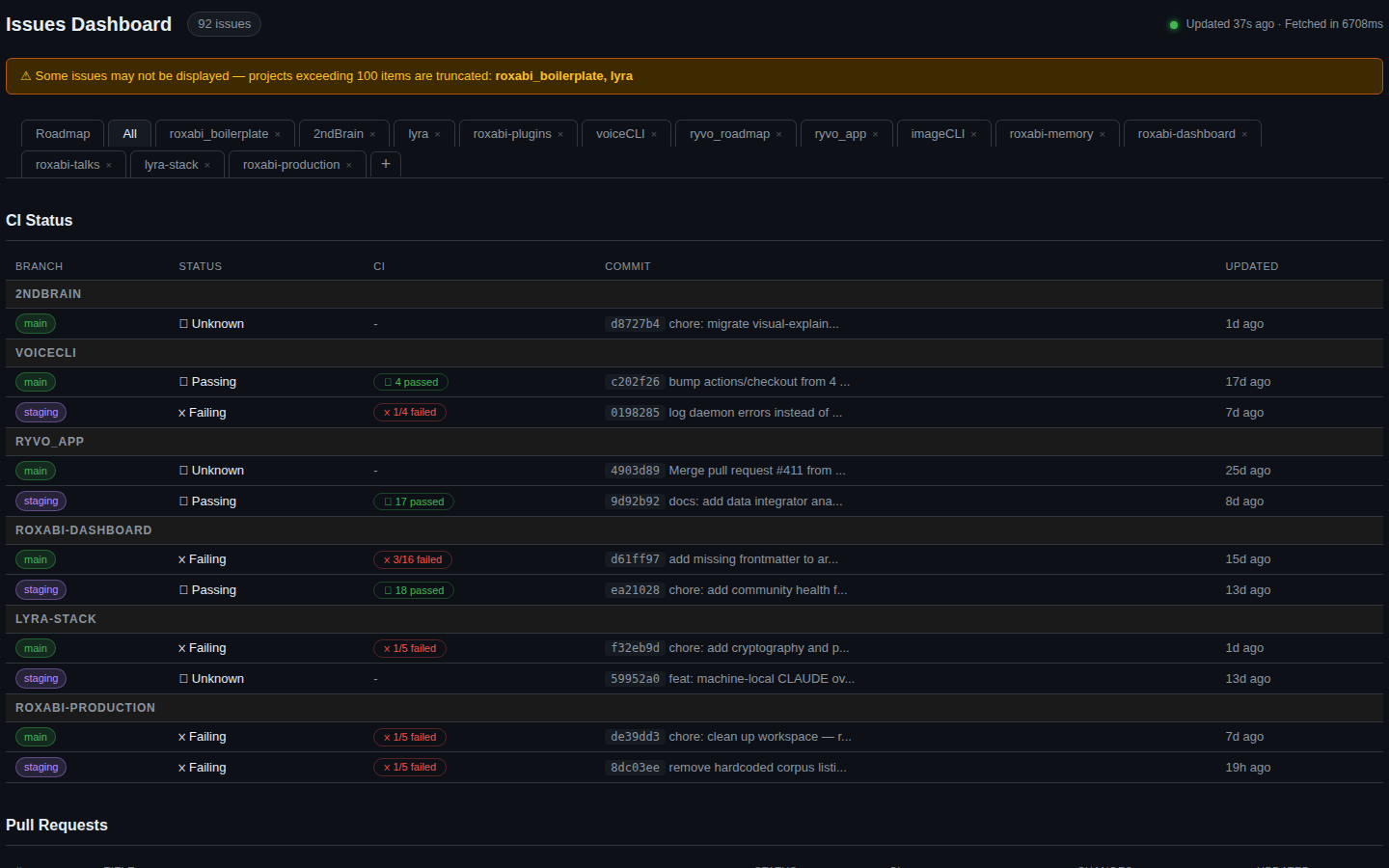

The steering pyramid

If it's red here, everything else waits

Green pipeline = permission to ship

Review queue — don't let it pile up

New work enters here, not above

If it's red at the top, you unstack. Don't pile new features on broken foundations.
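The unstacking rule can be expressed as a tiny decision function. The level names below are hypothetical labels for the four tiers of the pyramid, ordered top to bottom; the only logic taken from the slide is that a red level blocks everything beneath it.

```python
# Hypothetical names for the pyramid tiers, from top to bottom.
LEVELS = ["top_level", "pipeline", "review_queue", "new_work"]


def next_focus(status: dict[str, str]) -> str:
    """Return the highest red level; new work is allowed only if none is red."""
    for level in LEVELS[:-1]:
        if status.get(level) == "red":
            return level
    return "new_work"
```

For example, `next_focus({"top_level": "green", "pipeline": "red", "review_queue": "red"})` returns `"pipeline"`: the review queue also needs attention, but the pipeline comes first and new work waits.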

Test your product. Now.

Fast development cycles are useless if you never put your product in front of real users. Ship early, break things, learn fast.

The fast cycle

Build the smallest thing that works

↓ Ship it to real users

↓ Watch what they actually do

↓ Fix what matters, cut what doesn't

↻

Good practices at speed

CI/CD from day 1

If it's not in prod, it doesn't exist. Automate deploy so shipping is free.

Feature flags, not branches

Ship dark. Toggle on for 10% of users. Watch. Decide.

Metrics before opinions

You don't know what users want. You think you do. Measure instead.

Kill your darlings

That feature you spent 3 days on? If nobody uses it, delete it.
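The "toggle on for 10% of users" practice above is commonly implemented with deterministic hash bucketing. The following is a minimal sketch under that assumption; `is_enabled` and the flag name are hypothetical, and a hosted feature-flag service would normally handle this for you.

```python
import hashlib


def is_enabled(flag: str, user_id: str, rollout_percent: int) -> bool:
    """Place each (flag, user) pair in a stable bucket from 0 to 99."""
    digest = hashlib.sha256(f"{flag}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100
    # Users whose bucket is below the threshold see the feature.
    return bucket < rollout_percent
```

Because the bucket depends only on the flag and the user id, a given user keeps the same experience across sessions, and raising the percentage from 10 to 50 only adds users without removing anyone.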

The biggest waste isn't bugs or tech debt — it's building something nobody asked for.

What I'd do differently

Starting fast and iterating has its virtues. But the ideal sequence exists, and now I know it.

Before code

Product first

Personas, positioning, visual identity before the first line of code.

/init from day 1

Plan for plumbing. Use a standardized scaffold.

The .md / visual split from day 1

.md files for agents, HTML visuals for your decisions.

During build

Tooling = 30% of time

Every hour invested here saves ten later.

2 machines from the start

Never risk your dev env with a model that crashes.

The exploratory approach

Generate 10 variants, pick 3, iterate. The key is comparison.

Ongoing

Architecture checkpoints

A structural audit every 2–3 days.

The 50/50

Protect refactoring time. It's structural, not optional.

Steering dashboard

WIP limit applied to the ecosystem. Know when to unstack.

Agents are not algorithms

The next shift isn't technical. It's cultural. How we think about AI agents changes everything about how we work with them.

You expect deterministic output. You micromanage every line. When it makes a mistake, you feel betrayed. You treat the agent as an extension of yourself.

Give it a frame and an objective. Accept its output quality level. Don't verify every detail — manage like a leader manages a team.

The identity trap

When the agent is "you", criticism of its output feels personal. When the agent is a separate entity, feedback becomes constructive. This is the same dynamic as a manager whose team's work is critiqued.

"Does this agent, in this context, with this verification level, help me reach my objective?"

The cultural shift: from "AI as perfect tool" to "AI as managed collaborator". Not an algorithm. An entity.

Your intelligence, compounded.

I don't regret starting with the tech — it gave me velocity. But each phase taught me a lesson I could have learned earlier.

Mickael — Roxabi